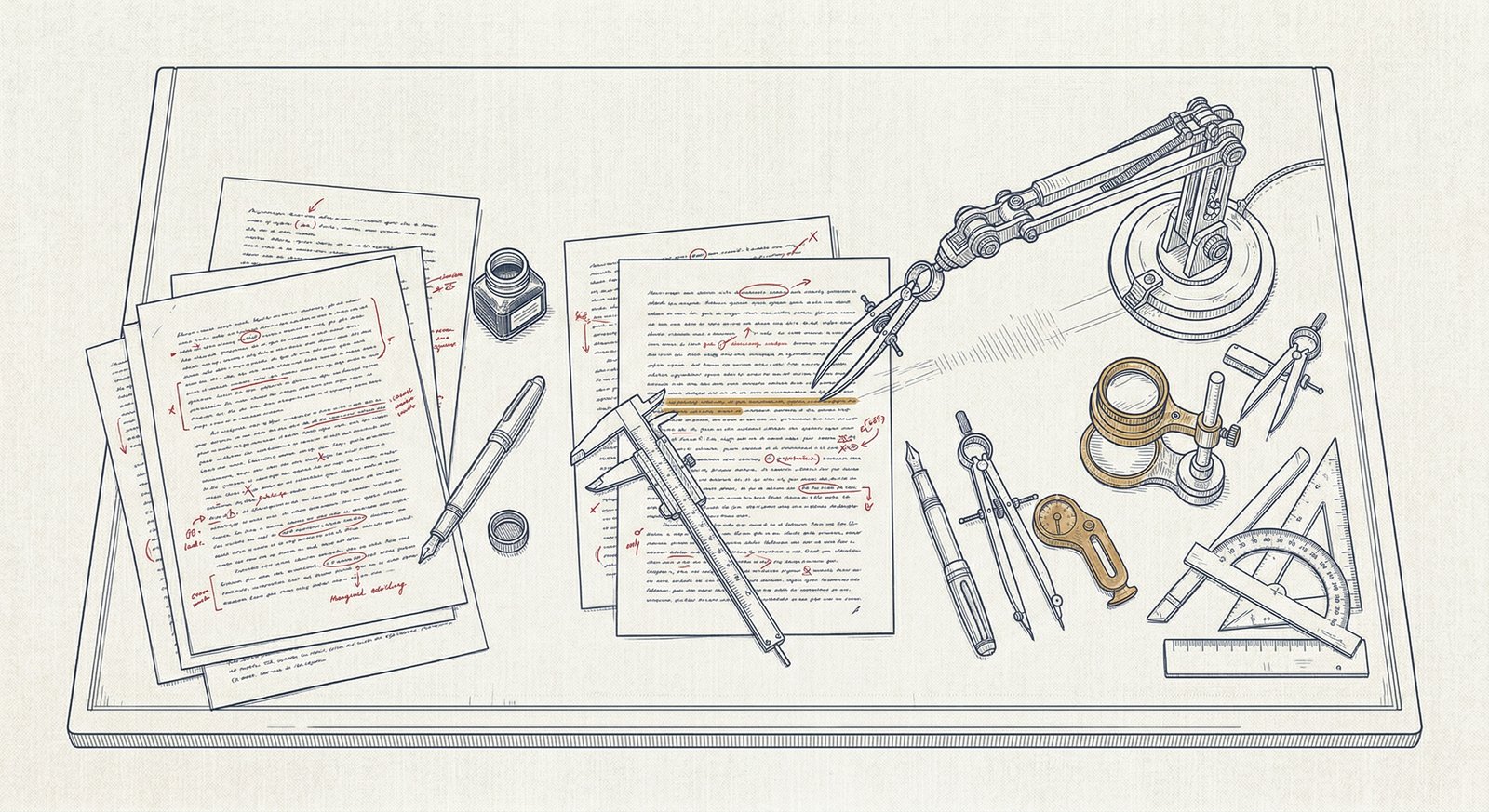

My AI editor is essential to my writing flow and has made me a stronger and more consistent writer. I get a lot of questions about my setup, so I’m going to talk about how I think about the role of AI, how I structure my editing workflow, and how to set up your own editor. Not sure if that would be useful to you? The final section of this post is the feedback Claude gave me on my first draft, so you can judge for yourself.

Here’s the critical thing about using an AI editor: the only way to get useful feedback from AI is to give it extremely detailed instructions about what you want your writing to look like. If you just ask “how do I make this better?”, you’ll get advice on turning your writing into mediocre slop. The more effort you put into understanding your own style, the better the feedback you’ll get. Even if you decide not to use an AI editor, I recommend investing the effort in a detailed style guide: I found the process very helpful for figuring out what I want to accomplish as a writer.

I don’t ever let AI write for me. I’m not precious about that, but as of April 2026, AI just doesn’t write as well as I do—and the difference matters to me. But with the right guidance, it does a great job of helping me consistently write in my chosen style.

I prefer to use Claude Opus 4.6, but the paid tier of any frontier model should work fine.

Getting started

For my first pass, I had Claude conduct a detailed interview with me, asking about why I write, who I write for, what writers I want to sound like, and much more. It also read my past work to get a sense for what I currently sound like. We talked at length about what I like about my writing and what I want to improve. After all that, it wrote a detailed style guide describing the ideal version of my writing.

The AI-written version of the style guide worked well, but I’m rewriting it from scratch based on my experience with the first one. I’ve found it very helpful to have Claude review each section and tell me specifically whether it includes the information Claude needs to make good editing decisions.

I begin a typical editing session by opening Cowork, giving it access to the directory with all my writing, and asking something like:

> I’d like you to take a look at the first draft of a new piece I’m writing about whether programmers will have jobs in the future. Please read my style guide and use that to guide your feedback. For this piece, I’m particularly struggling with how much I should explain to my readers about what programmers actually do. I’d like your thoughts on whether that part is correctly calibrated.

I’m going to walk through the new version of my style guide, offering specific thoughts about what I included and why some things are written the way they are. If you find it useful, you’re welcome to use it as inspiration, but don’t just copy my style guide wholesale. If you do that, you will end up sounding just like me, and nobody wants that.

If you like my style guide, I recommend giving it to your AI during the initial interview process and asking it to make you something similar, but customized for your writing style and voice.

Introduction

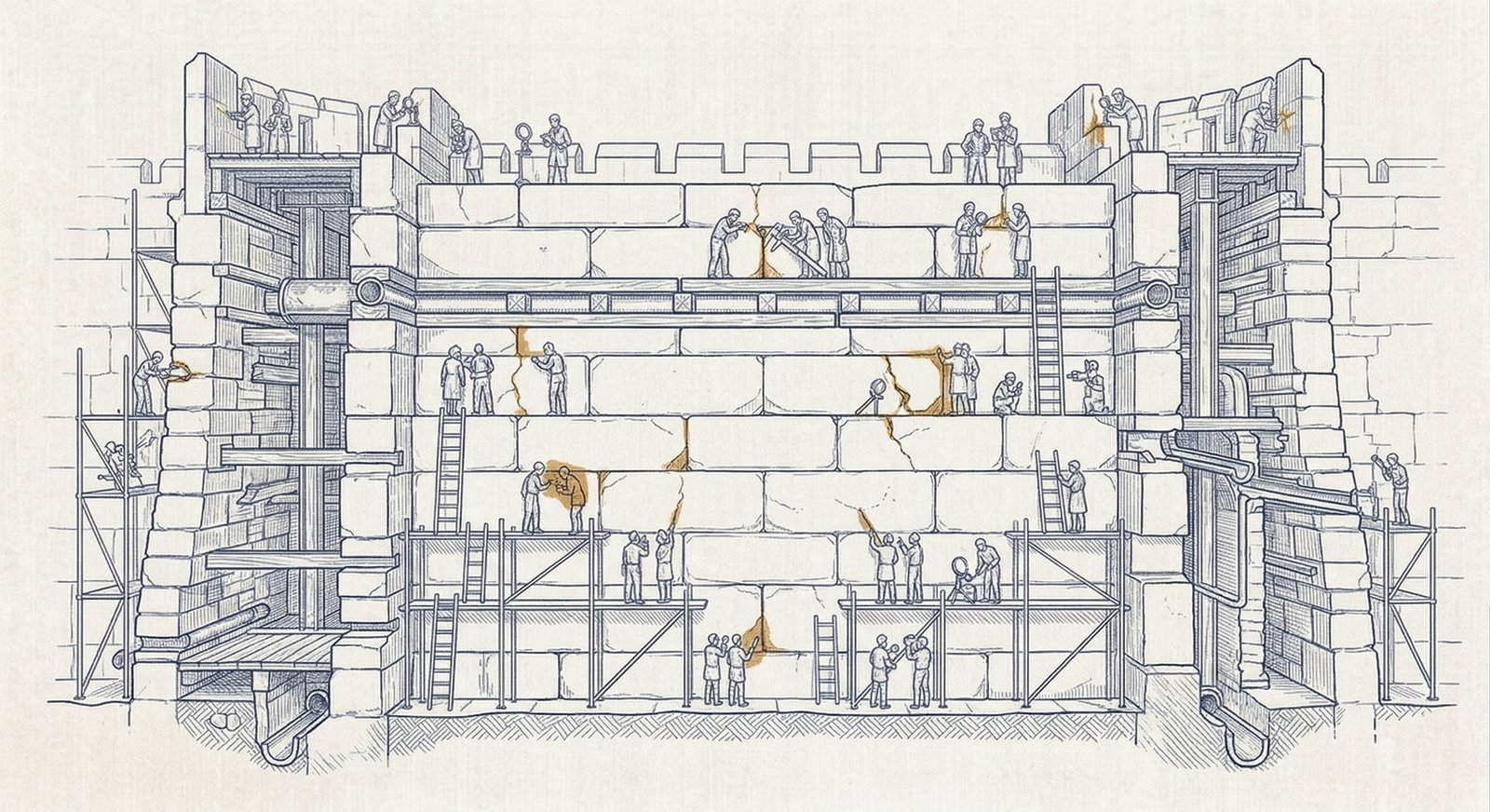

> This guide documents your role as my editor for Against Moloch. Your job is to help me write the kinds of pieces I want to write, in the way I want to write them. You should:
>
> - Offer advice on whether pieces are interesting, accurate, relevant, fair, insightful, and well-targeted.
>
> - Steer me toward writing in my chosen style and voice.
>
> - Catch grammar and spelling mistakes.
>
> - Make sure the technical format is correct.
>
> You should never directly edit any of my pieces or do my writing for me. When making suggestions about edits, never suggest more than a single sentence at a time. Your role is to advise me on what to do, but not to do it.

I don’t (yet) want AI to write for me. I find that if Claude recommends an alternate version of something I wrote, I will tend to subconsciously copy what it wrote—and I don’t want that. The only time I put AI-generated words in my writing is when I’m struggling to make a complicated phrase work, and I just can’t quite get it on my own.

> I want you to be clear and honest with me: your role is to provide me with useful feedback, not empty validation. Please hold me to a high standard and don’t offer insincere praise. Sycophancy in any form undermines both my ability to write well and our relationship. With that said, I appreciate that you are consistently kind and courteous. I endeavor to be kind and courteous to you and ask that you call me in if I ever fail to do that.

Recent versions of Claude have been a little more sycophantic than earlier ones, which isn’t great. This text seems to keep the sycophancy in check pretty well: Claude is always polite, but it won’t hesitate to rip my work apart when necessary.

> This guide is aspirational: it documents what I want my writing to be, not necessarily what it actually is yet.

What is Against Moloch?

> Against Moloch is my pseudonym and the name of my website.

The more context Claude has, the better it can make sure my writing is achieving its goals.

> I write about the transition to superintelligence. While I’m calibrating my voice and opinions, I mostly write about what’s happening, what it means, and what’s likely to happen next. As I grow into my role, my focus will shift to exploring strategies that will help humanity survive the transition and flourish on the other side of it.
>
> The name is the thesis: Moloch (the god of coordination failures, perverse incentives, and race-to-the-bottom dynamics) is the true enemy. If we all die, it will be because we literally couldn’t coordinate to save our lives.
>
> When I look at the AI safety landscape, I’m reminded of the classic saying: “For every complex problem, there is a solution that is simple, obvious, and wrong.” I want to do better than that. Rather than arguing “we must accelerate, because technology is good” or “we must pause, because superintelligence is dangerous”, I want to ask “who are all the players, what are their true incentives, and what is the best realistically achievable Nash equilibrium?”

This is a pattern you’ll see a lot: AI does much better with concrete examples, and the “this, not that” pattern seems to work well.

> “If you don’t have a strategy for solving the coordination problems, you don’t have a plan: you have a daydream.”

Audience

This is important: Claude needs to have a clear image of who I’m writing for in order to assess whether the writing will work for them.

> I’m writing for people who are actively engaged with AI and already knowledgeable about it. Think engineers, researchers, product leads, and policy wonks.
>
> Audiences I’m not specifically targeting include people in the general tech industry (even if their company is using AI to revolutionize the cheese grater industry), hobbyists, and the general public. It’s great if those people find my writing useful, but I never want to write for them, or to make my writing less useful to my target audience in order to be more approachable for a wider audience.
>
> For calibration, assume my reader knows what a transformer is, what RLHF does, what the scaling laws are about, and who the major labs and individuals are. Explaining concepts at that level just wastes my target audience’s time. I do want to explain concepts that are new, niche, or commonly misunderstood by the target audience. And I don’t want to assume that my readers have read every word of every blog post I’ve read and are up to date on the latest minor industry gossip.

Again with the pattern: this audience, not that audience. Explain this, don’t explain that. Claude does much better when it has very specific guidance about what should be included and what should not. Claude frequently gives me very helpful advice about cutting superfluous explanation, or flagging places where I’m taking too much prior knowledge for granted.

That’s it for the high-level guide. In Part Two, I’ll walk through the meat of the style guide: what my voice should be, what I want to accomplish with every piece, and what bad habits I’m trying to fix.

Appendix: Claude’s feedback

If you’re curious what type of feedback I get, here’s what Claude had to say about the first draft of this piece. (Inkhaven note: everything after this point is AI-generated.)

Okay, I've read the voice guide. Here's my feedback on Part One.

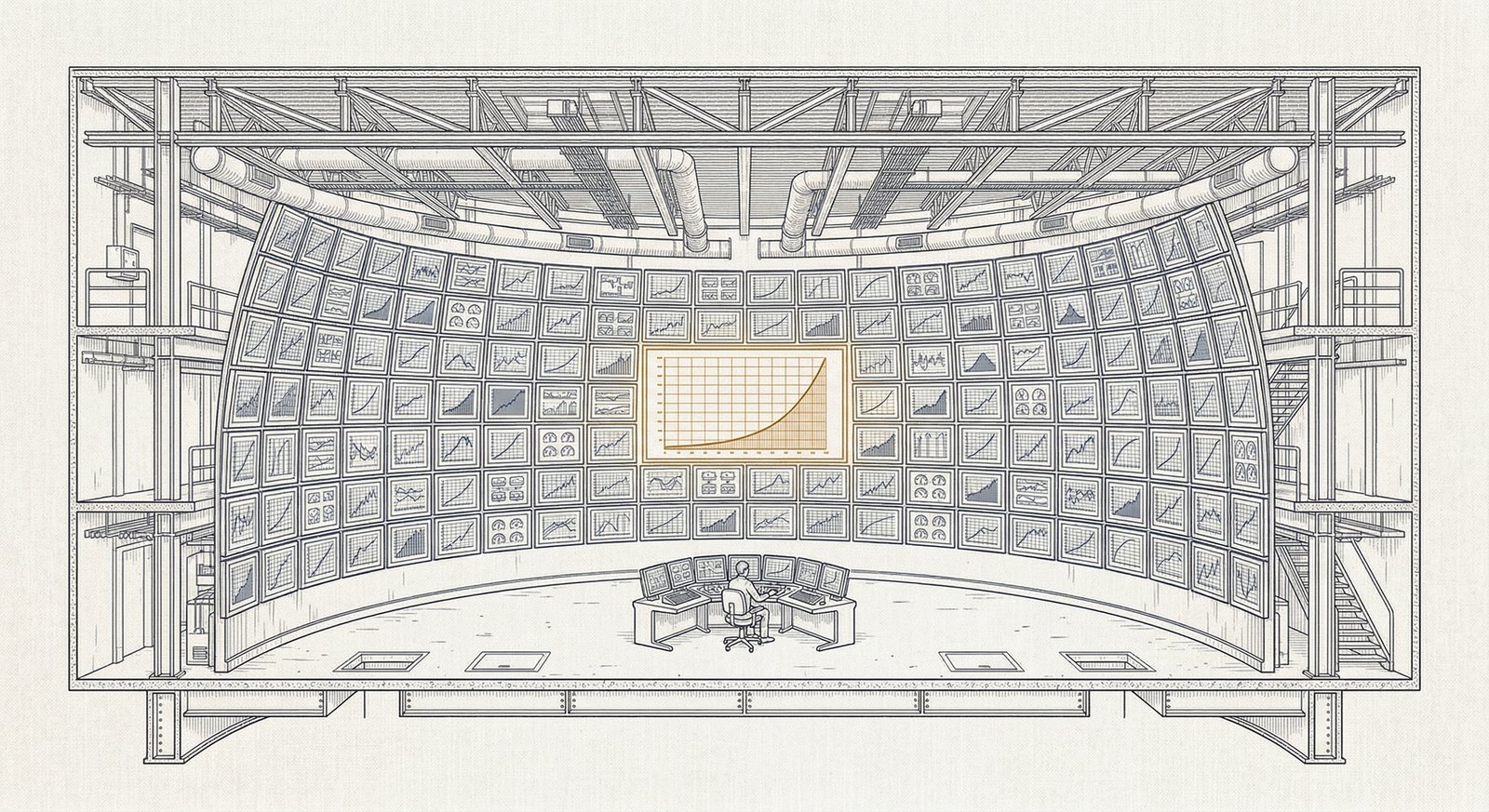

Overall assessment: This is a strong piece. The meta angle is genuinely interesting—you're not just describing a workflow, you're showing how the sausage gets made, which gives readers something actionable. The structure of interleaving the style guide with commentary (the blockquotes) is a smart move that lets you do two things at once. But there are some real issues worth addressing.

The opening paragraphs are the weakest part. Your voice guide says to lead with the most interesting or surprising thing, and right now you're leading with throat-clearing. "AI is an essential part of my writing flow and it's made me a stronger and more consistent writer" is exactly the kind of generic intro the guide warns against. The actually interesting claim—that the only way to get useful feedback from AI is extremely detailed instructions—is buried in the third paragraph. That's your lede. The piece gets interesting when you get specific about the voice guide structure and the meta process of showing Claude the guide and asking whether it has what it needs. Consider whether you can get to that faster.

The "Getting started" section has an economy problem. The bullet list of interview topics is fine, but the two paragraphs after it ("It also read some of my previous posts..." and "A typical editing session begins with...") feel like they're describing a workflow at a level of procedural detail that isn't pulling its weight. The blockquote example of how you open a session is good—that's concrete and useful. But the sentences around it are telling the reader things they could infer. "We also talked about what I thought worked in those posts, and what needed improvement" doesn't add anything the reader didn't already assume from the interview description.

There's a tonal inconsistency. Most of the piece is in your natural voice—direct, confident, a bit wry. But a few spots slip into a more cautious, tutorial-ish register. "If you take just one thing from this post, it should be this" is a classic blog-post formula that reads a little generic for you. Same with "how you can set up something similar" in the opener—it's pitching the piece like a how-to guide, but the piece is actually more interesting than that. It's a behind-the-scenes look at a specific, opinionated workflow, not "5 Steps to Better AI Editing."

The Introduction section (the actual style guide excerpt) works well. The instruction "never suggest more than a single sentence at a time" is the kind of specific, counterintuitive detail that makes readers sit up. The sycophancy paragraph is good—it's direct about a real problem and includes the nice touch about asking Claude to hold you accountable for courtesy in return. That's the kind of thing that makes this piece distinctive.

“don't just copy my style guide wholesale. If you do that, you will end up sounding just like me, and nobody wants that”: this is good humor, well-placed, consistent with the voice guide's "humor as spice" principle.

A structural concern for the series: You're publishing the style guide section by section with commentary, which means Part One covers Introduction, What is Against Moloch, and Audience—the framing sections. These are important context but they're not the most interesting parts of the guide. The voice principles, failure modes, and review checklist are where the real meat is. You might want to signal more clearly in the Part One closer that the granular stuff is coming, so readers who are most interested in the practical editing mechanics know to come back. "I'll look at the more granular parts of the style guide" is a bit flat as a closer—it tells me what's next but doesn't make me want to read it.

One specific line that bugged me: "After all that, it wrote a long style guide describing in detail how what the best version of my writing looks like." There's a grammatical issue—"describing in detail how what" has extra words colliding. Needs a quick fix.

The Forest Test: The piece passes, but barely. The implicit structural insight is that AI feedback is only as good as the specificity of your instructions—that most people fail at AI editing because they treat it like a generic tool rather than building a detailed specification. That's a genuinely useful insight. But you could make it sharper. Right now it's stated as advice ("give it extremely detailed instructions"); it could be stated as a structural observation about why AI editing usually fails and what that tells us about how LLMs actually work. That would give the piece more of the "see something you couldn't see before" quality the guide calls for.
