Perverse Incentives, Part One

Nobody ever said game theory was easy

Inkhaven note: today’s post will eventually be part of a longer sequence. But this particular topic keeps coming up in conversation, so I want to get it down in writing sooner rather than later.

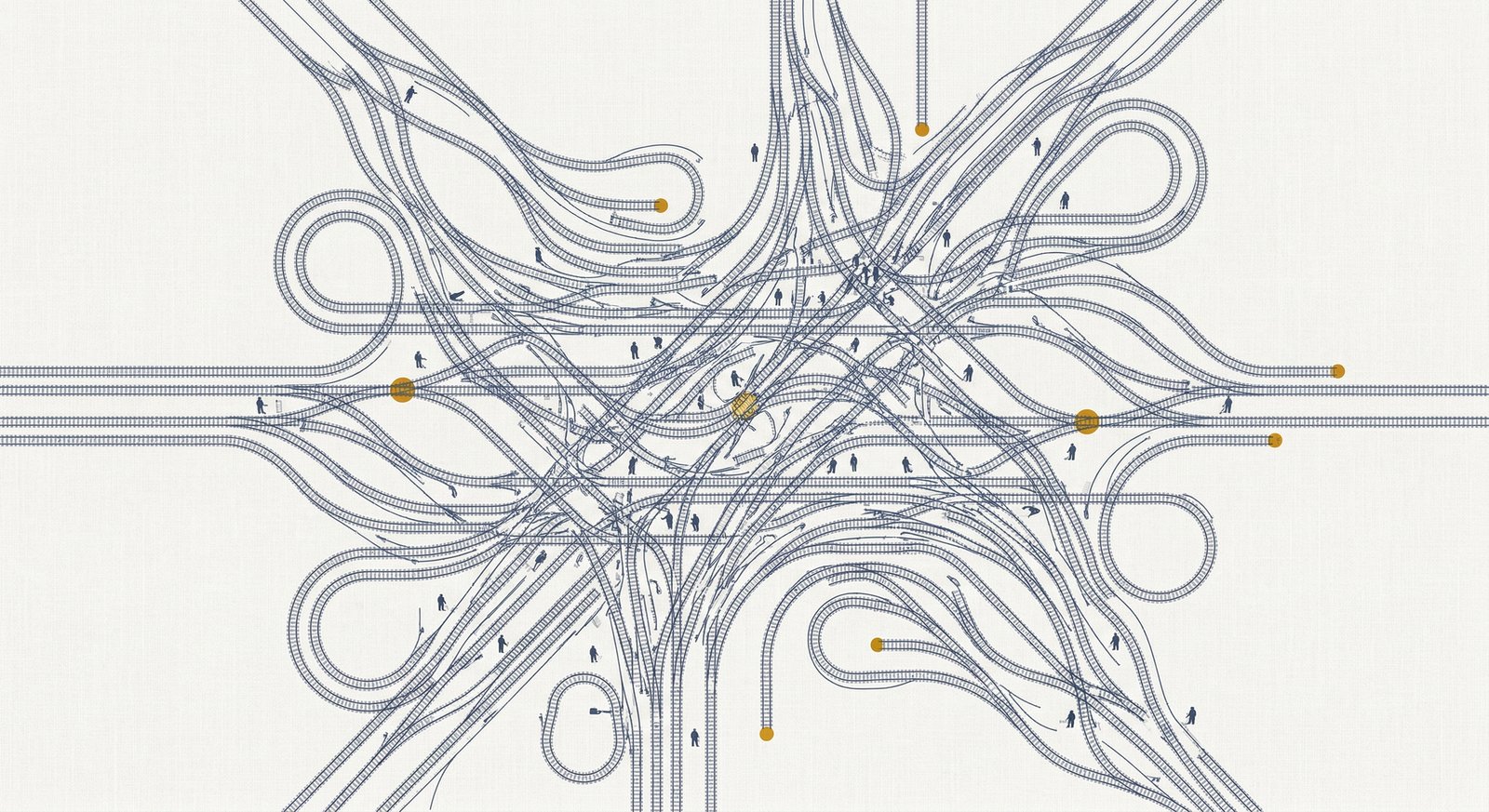

Many key decision makers have powerful incentives to favor rapid AI development even if that entails a significant risk of human extinction. Therefore, any pause strategy that relies on convincing those people that rapid AI development is dangerous is doomed to failure.

In the AI safety community, I see lots of good discussion of why a pause would be a good idea, how it might be implemented, and how to convince people that it would be a good idea. But I’d love to see more engagement with the game theory of AI politics. Achieving a useful pause requires overcoming some perverse incentives that don’t get enough attention.

The game theory won’t solve itself, so let’s talk about incentives: why on earth would anyone support rapid AGI development if they thought it might cause human extinction?

Who wants to live forever?

Assuming that AGI doesn’t kill us all, it’s likely to lead to rapid advances in medical technology. If you can manage to live until a few years after AGI, you have a good chance of getting access to medical technology that can greatly extend your life—which means you’ll live long enough to see even more powerful medical technology, and perhaps achieve medical immortality. Lifespans of 1,000 years or perhaps much longer are entirely plausible for anyone who makes it to that critical point.

This has critical implications for each of us as individuals. If AGI happens soon enough for you, you can have an extremely long (and extremely good) life. Nick Bostrom’s paper “Optimal Timing for Superintelligence” does the math and concludes that a completely selfish person should favor rapid AI development even if that entails a high risk of human extinction.

Conversely, a pause asks some people—perhaps many people—to sacrifice a significant amount of expected lifespan for the common good. Is that a realistic ask? It might be: in the abstract, I expect most people would say that it’s more important to avoid human extinction than to ensure that they personally get to live for 1,000 years.

But it’s also true that, faced with imminent mortality, people don’t want to die.
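To make the selfish calculation concrete, here is a back-of-the-envelope version. It is a deliberately crude sketch, not the model in Bostrom’s paper, and every symbol is an assumption introduced for illustration: $p$ is the probability that AGI causes extinction, $L_0$ is your expected remaining lifespan without AGI, and $L_1$ is your expected remaining lifespan if AGI arrives and goes well. Treating extinction as leaving you roughly zero remaining years, a purely selfish person prefers rapid development when:

$$
(1 - p)\,L_1 > L_0 \quad\Longleftrightarrow\quad p < 1 - \frac{L_0}{L_1}
$$

For example, with $L_0 = 15$ years and $L_1 = 1{,}000$ years, the threshold is $p < 1 - 15/1000 = 0.985$. Under these toy assumptions, the selfish case for speed survives even very high extinction risk.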

1. Your current age is critical

The incentives here depend strongly on your current age. If you’re 25, it’s easy to bravely declare that you’re willing to die of old age to keep humanity safe from rogue AI.

But if you’re 70 years old and starting to grapple with your own imminent mortality, the tradeoff feels different. A pause of a decade or two is effectively a death sentence—now seems like a great time for some motivated reasoning.
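The age effect can be sketched with a toy calculation. Everything here is an illustrative assumption rather than an estimate: the function name, the two hypothetical policies (a fast, risky race and a slow, safer pause), the extinction probabilities, and the simplification that you have a fixed number of years left. The only number taken from this post is the 1,000-year post-AGI lifespan.

```python
def expected_remaining_years(remaining_years, years_until_agi, p_extinction,
                             post_agi_years=1000.0):
    """Toy expected remaining lifespan for a purely selfish person.

    All inputs are illustrative assumptions, not estimates:
      remaining_years  - years left without life-extending medicine
      years_until_agi  - how long until AGI arrives under a given policy
      p_extinction     - chance that AGI kills everyone when it arrives
      post_agi_years   - extra lifespan if AGI goes well (the post's 1,000)
    """
    if remaining_years <= years_until_agi:
        # You die of old age before AGI arrives, so its outcome is irrelevant.
        return float(remaining_years)
    # You live until AGI, then gain post_agi_years unless extinction occurs.
    return years_until_agi + (1.0 - p_extinction) * post_agi_years


# Hypothetical policies: racing is fast but risky, pausing is slow but safer.
RACE = {"years_until_agi": 5, "p_extinction": 0.30}
PAUSE = {"years_until_agi": 25, "p_extinction": 0.05}

for age, remaining in [(25, 55), (70, 15)]:
    race = expected_remaining_years(remaining, **RACE)
    pause = expected_remaining_years(remaining, **PAUSE)
    better = "race" if race > pause else "pause"
    print(f"age {age}: race={race:.0f}, pause={pause:.0f}, selfish pick: {better}")
```

With these made-up numbers, the 25-year-old does better under the pause (about 975 expected years versus about 705), while the 70-year-old’s expected remaining lifespan drops from about 705 years to 15. The exact figures mean nothing; the point is that the sign of the tradeoff flips once the pause is longer than the time you have left.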

2. Guess who’s very old?

I bring this up because if you believe in short timelines, two of the most important people in AI policy are Donald Trump (age 79) and Xi Jinping (age 72). I leave as an exercise for the reader the question of whether those two individuals are likely to altruistically sacrifice their own lives for the common good.

3. The enemy also gets a vote

A fundamental principle of game theory is that a winning strategy has to work even if your opponent makes an optimal move to counter you. Here’s an obvious opposing move: if I were an accelerationist, I would make it my business to ensure that the leader of my country understood that his life depended on AI development proceeding at maximum speed.