Mythos Radar

An excerpt from tomorrow’s newsletter

Today’s Inkhaven post is a preview of the Mythos content from tomorrow’s newsletter.

This week’s big story is the limited release of Claude Mythos Preview. The headline is that Mythos is alarmingly good at cybersecurity, with the ability to find and exploit critical vulnerabilities en masse. Anthropic is handling that as responsibly as one could hope for, but the next year or two will be challenging for security. If you haven’t already, this would be a good time to review and improve your personal security practices.

Cybersecurity isn’t the only story here: Mythos is probably the first of the next generation of much larger models. Early data suggest it represents another acceleration of the rate of capability progress, although that’s hard to assess while it’s still in limited release. And from a safety perspective, Anthropic says this is both the most aligned model they’ve ever created and also the most dangerous.

Top pick

How scary is Claude Mythos?

Rob Wiblin’s analysis of Mythos covers all the key points. If you only read this piece, you won’t miss anything vital.

Mythos Preview is another milestone in the race to AGI. In retrospect, I suspect it’ll seem as significant as the November 2025 release of Opus 4.5 that kicked off the agentic coding craze. Rob covers both sides of this story: Mythos is perhaps the first model powerful enough to cause a major crisis if misused, and it’s also (as far as we can tell) considerably better aligned than any previous Anthropic model.

I expect there will be strong disagreement about how those two factors balance out. Some people will see Mythos as evidence that we are rushing toward AGI without having solved alignment, and others will argue that alignment is progressing as fast as capabilities and we’ll probably manage to muddle through. I believe those aren’t mutually exclusive: we are rushing toward AGI with an alignment strategy that is probably good enough to muddle through with, but which has a real chance of getting us all killed.

Mythos is evidence for short timelines, bringing a big step forward for capabilities that is at least consistent with past trendlines and might represent an inflection point toward even faster progress.

Mythos

All of the following pieces are good, but most of you can just read the summaries and pick and choose which links to follow.

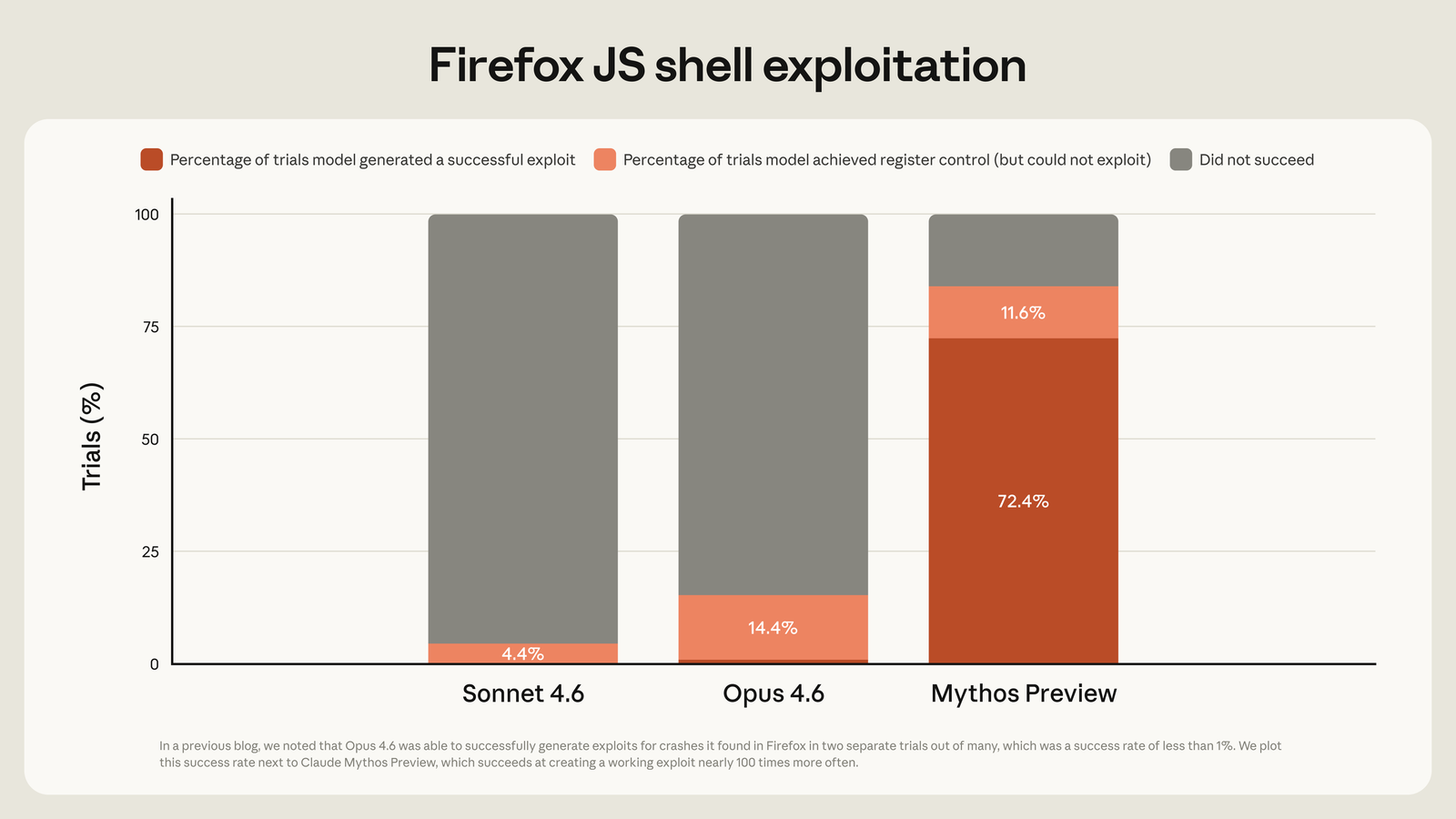

Mythos Preview’s cybersecurity capabilities

Mythos is better at finding and exploiting vulnerabilities than any past model.

Anthropic’s analysis is spot on:

There’s no denying that this is going to be a difficult time. While we hope that some of the suggestions above will be helpful in navigating this transition, we believe the capabilities that future language models bring will ultimately require a much broader, ground-up reimagining of computer security as a field.

As part of that reimagining, Anthropic is giving key companies a head start in the cybersecurity arms race via Project Glasswing. This seems like the best path forward, which doesn’t mean it’s guaranteed to succeed.

Ryan Greenblatt makes some informed guesses

Ryan Greenblatt estimates the potential impact of Mythos:

If Mythos was released as an open weight model in February (or tomorrow), this would cause ~100s of billions in damages, with a substantial chance of ~$1 trillion in damages

He also estimates that inside Anthropic, Mythos might make individual engineers 1.75x as productive as they would otherwise be, with a 1.2x overall acceleration of AI R&D. The difference between those two numbers is a reminder that many factors go into moving R&D forward. Recursive self-improvement requires more than just a model that’s good at writing code.

The Zvi report

Zvi does a two-part deep dive, covering the system card and the cybersecurity implications. Excellent, comprehensive, long.

New sages unrivalled

Dean Ball argues that Mythos marks a new era for AI. I agree, but I don’t have to like it.

I wrote on X that Mythos means the training wheels are coming off on AI policy. Perhaps the Department of War’s effort to strangle Anthropic is, to use another metaphor, a sign that the gloves are off too. If the last month has made anything clear, it is that we are in a nastier, sharper, harsher, meaner era of AI discourse, policy, and—ultimately—of AI development and use.

Hot take: failing to fully appreciate and plan for this new era is likely the biggest unforced error the AI safety community will make over the next couple of years. Far more than before, many key players will be motivated by ruthless self-interest rather than an altruistic desire to do what is best for humanity. We need to fully accept that fact and plan accordingly.