Monday AI Radar #20

Vulnerabilities as far as the eye can see

Top pick

Why it’s getting harder to measure AI performance

Timothy B. Lee explores why capability benchmarks are starting to break down. As frontier models get more capable, they’re quickly saturating traditional benchmarks. The problem with building new benchmarks is that we now need to measure the ability to solve complex, long-duration tasks. It’s easy to test whether a model knows basic chemistry facts, but how do you test the ability to create a good business plan?

There are no easy answers here—as he points out, we’re terrible at benchmarking humans. Software companies have been conducting job interviews for 50 years, but there’s still very little evidence that they are effective at identifying good programmers. He also flags a subtle point with implications for future capability advancement: as it becomes harder to test frontier capabilities, it becomes harder to train for them.

My writing

How to watch an intelligence explosion: Ajeya Cotra’s new AI automation milestones are a great complement to the AI Futures Project’s R&D progress multiplier. Together, they let us measure recursive self-improvement and predict when a misaligned AI is most likely to betray us.

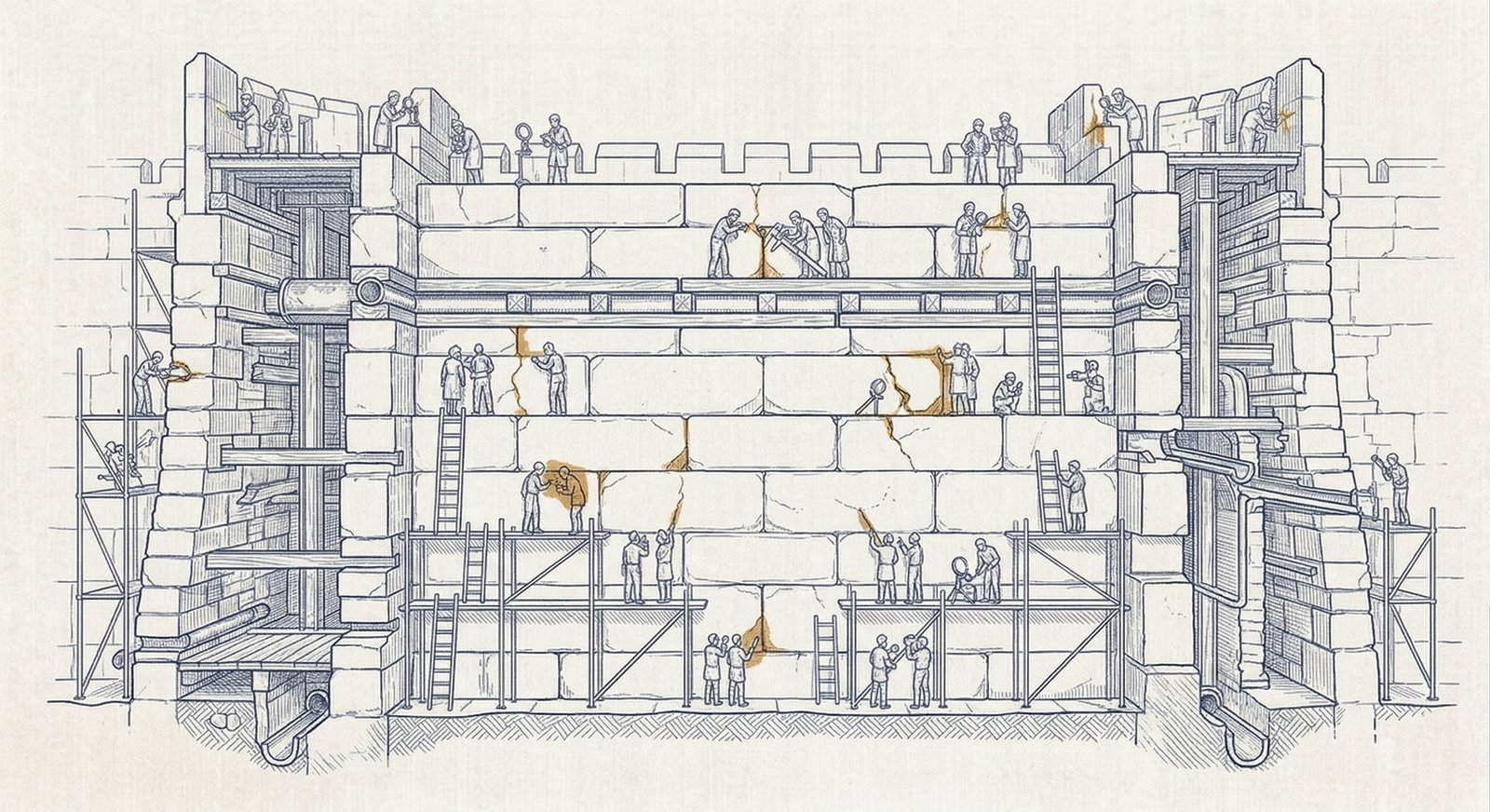

Cybersecurity

Offensive cybersecurity time horizons

Lyptus Research has a new report on offensive cybersecurity capabilities that builds on both METR’s time horizons work and some similar work at UK AISI. They find a cybersecurity task horizon of 3.2 hours with a doubling time of 5.7 months, although:

we believe these estimates understate recent progress… The results reported here are therefore lower bounds on early-2026 frontier capability.

That sounds right: capabilities are growing so fast right now that nobody has time to figure out how to make the most of each new generation of models.
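As a rough illustration of what those two numbers imply together, here is a minimal sketch that extrapolates the task horizon forward. It assumes the doubling time stays constant, which is a simplification (the report itself describes its estimates as lower bounds). The function names are hypothetical and do not come from the report.

```python
import math

# Figures quoted from the report as summarized above.
HORIZON_HOURS = 3.2      # reported cybersecurity task horizon
DOUBLING_MONTHS = 5.7    # reported doubling time


def projected_horizon(months_ahead: float,
                      horizon_hours: float = HORIZON_HOURS,
                      doubling_months: float = DOUBLING_MONTHS) -> float:
    """Task horizon after `months_ahead` months, ASSUMING a constant doubling time."""
    return horizon_hours * 2 ** (months_ahead / doubling_months)


def months_until(target_hours: float,
                 horizon_hours: float = HORIZON_HOURS,
                 doubling_months: float = DOUBLING_MONTHS) -> float:
    """Months until the horizon reaches `target_hours`, under the same assumption."""
    return doubling_months * math.log2(target_hours / horizon_hours)


if __name__ == "__main__":
    for months in (0, 6, 12, 24):
        print(f"+{months:2d} months: {projected_horizon(months):6.1f} hours")
    # A 40-hour task is used here only as an illustrative target.
    print(f"40-hour horizon reached in ~{months_until(40):.1f} months")
```

If the trend is actually accelerating, as the authors suggest, these projections would be too conservative.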

Vulnerability reports are surging

AI is now finding important vulnerabilities in the real world, at scale. Willy Tarreau reports a surge in vulnerability reports:

We were between 2 and 3 per week maybe two years ago, then reached probably 10 a week over the last year with the only difference being only AI slop, and now since the beginning of the year we're around 5-10 per day depending on the days (fridays and tuesdays seem the worst). Now most of these reports are correct, to the point that we had to bring in more maintainers to help us.

This is happening everywhere: via Simon Willison, we see similar reports from Greg Kroah-Hartman and Daniel Stenberg.

Nicholas Carlini on automated vulnerability discovery

The Security Cryptography Whatever podcast talks with Nicholas Carlini about finding vulnerabilities with Opus. He’s getting remarkable results using the public version of Opus 4.6 with minimal scaffolding: almost all of the capability is coming from the core model. Remarkable cyber capabilities are now available to anyone with a credit card, for better and for worse.

Thomas Ptacek complains about AI doing all the interesting parts:

It actually is terrible, right? Because all of the fun problems are gone. You just have to sit there and wait for them to come up with a new model. I hate it.

Supply chain attacks

VentureBeat reports on the axios breach. The attack began with some sophisticated social engineering to obtain credentials that let the attackers add malicious software as a dependency of a widely used library. In a similar vein, TechTalks reports on GhostClaw, malware that specifically targets people running OpenClaw on Macs.

Supply chain attacks are concerning for professional developers, but they’re especially dangerous to vibe coders and people running agents like OpenClaw without understanding what they’re loading onto their computers. Expect to see increasingly sophisticated attacks targeting those people.

AI psychology

Preferences of models that claim to be conscious

A new paper finds that models that are fine-tuned to claim to be conscious develop new behaviors and preferences, including claiming to have feelings and not wanting their thoughts to be monitored.

This is solid work, but I would be careful not to read too much into it. There isn’t enough information to say whether we’re seeing a significant shift in model persona, or more superficial role-playing.

Emotions in LLMs

Anthropic finds evidence that LLMs exhibit “functional emotions” that activate in situations that would produce similar emotions in humans. Furthermore, activating those emotions causes behavioral changes similar to the associated behaviors in humans.

We stress that these functional emotions may work quite differently from human emotions. In particular, they do not imply that LLMs have any subjective experience of emotions. … Regardless, for the purpose of understanding the model’s behavior, functional emotions and the emotion concepts underlying them appear to be important.

It’s hard to know exactly what is happening inside an LLM, but this research adds to the growing body of evidence that model psychology provides useful tools for predicting and steering LLM behavior. This is encouraging: the more robustly those tools work, the more likely it is that character training will be a viable path to robust alignment.

Strategy

Beware a “good-enough” pause

Anton Leicht does not support a pause:

even if you are principally and perhaps exclusively concerned with reducing catastrophic risks, you should oppose the notion of a pause. The idea’s current uptake is not indicative of lasting political traction; its most likely implementations would be a huge safety setback; and it is lastingly making AI politics worse.

In many areas, it makes sense to accept good-enough legislation that partly advances your goals. A climate change activist might support a weak carbon reduction bill on the grounds that it’s better than nothing and paves the way for stronger legislation in future. Anton argues that the best-achievable pause legislation would be worse than nothing: it would not durably slow down AI progress, and it would shift the balance of power in ways that reduce the likelihood of a good outcome. Further, he argues that there is no plausible path from currently achievable legislation to better legislation in future.

Anton and I have significant object-level disagreements, but there’s a grave danger that he’s right about the politics here, especially with regard to the Bernie Sanders / AOC moratorium on data center construction.

Anthropic’s new Responsible Scaling Policy

Zvi just published his analysis of Anthropic’s new Responsible Scaling Policy, which walks back what many people—including some Anthropic employees—had understood to be firm commitments in the previous version. Part one of the analysis focuses on that issue, while part two examines the substance of the new version.

I broadly agree with Zvi’s analysis, although I’m a little more forgiving: Anthropic isn’t perfect, but the DoW conflict shows they are still willing to fight hard when it matters. Notice when people break their commitments, but don’t over-index on a single data point.

Six milestones for AI automation

Ajeya Cotra proposes milestones for measuring progress toward automation of AI research and industrial production. This is an elegant way of thinking about some critical thresholds and gives us a concrete way of predicting when a misaligned AI would be most likely to betray us.

Alignment and interpretability

AI should be a good citizen, not just a good assistant

Forethought wades into the obedience vs virtue debate, arguing that AI should “proactively take actions that benefit society more broadly.” This is more than just staking out a position on the corrigibility/virtue axis: they have some clever ideas about making prosocial behavior proactive but subordinate to other imperatives. It’s a good contribution to the discussion, but the open question is whether this approach can deliver either the predictability of strict corrigibility or the robust generalization of a virtue-based character approach.

Are we dead yet?

The AI Doc

The AI Doc (or How I Became an Apocaloptimist) is a new documentary featuring interviews with AI safety advocates, accelerationists, and lab CEOs. People across the spectrum seem to like it, which is impressive. Zvi reviews it and MIRI has a FAQ.

The consensus is that it’s well made and a good introduction to AI existential risk, but there isn’t much substance. I don’t feel the need to see it, but I’d consider taking an AI-naive friend to it.

Jobs and the economy

A field experiment on the impact of AI use

Here’s a rare intervention study that measured the high-level productivity benefit of AI use. The results are impressive: startups that received training on how other firms had used AI generated 1.9x the revenue of startups that did not receive the intervention.

Economists often argue (see below) that AI won’t have rapid economic effects because it’ll take a long time for it to diffuse through the economy. That argument breaks down beyond a certain capability threshold: if AI-savvy firms have double the revenue of their competitors, it won’t take long for all surviving firms to be AI-savvy.

Forecasting the economic effects of AI

The Forecasting Research Institute has a new paper that forecasts the economic effects of AI. The authors have been careful and systematic, but the paper’s conclusions make no sense.

Their most aggressive scenario (to which they assign a 14% probability) predicts that by 2030, AI will be able to perform years of research in days, outperform humans at many jobs, and create Grammy/Pulitzer-caliber media. And yet, the scenario predicts that by 2050 (20 years after those capabilities arrive) annual GDP growth will be 4.5%. There’s no way both of those predictions can be true at the same time.
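To put 4.5% in perspective, here is a quick sketch of the compounding arithmetic. The 2.5% baseline is an illustrative assumption for a typical advanced economy, not a figure from the paper, and treating growth as a constant annual rate is a simplification.

```python
import math

SCENARIO_GROWTH = 0.045   # annual GDP growth quoted from the scenario
BASELINE_GROWTH = 0.025   # ASSUMPTION: illustrative baseline, not from the paper


def doubling_time_years(annual_growth: float) -> float:
    """Years for GDP to double at a constant annual growth rate."""
    return math.log(2) / math.log(1 + annual_growth)


def cumulative_multiple(annual_growth: float, years: int) -> float:
    """How many times larger GDP is after `years` of constant growth."""
    return (1 + annual_growth) ** years


if __name__ == "__main__":
    for label, rate in (("scenario", SCENARIO_GROWTH), ("baseline", BASELINE_GROWTH)):
        print(f"{label}: doubles every {doubling_time_years(rate):.1f} years, "
              f"{cumulative_multiple(rate, 20):.2f}x after 20 years")
```

Under these assumptions, the scenario economy doubles roughly every 16 years rather than roughly every 28, which is a meaningful difference but a modest one next to the capabilities the scenario describes.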

Politics

Opposing domestic surveillance is not “anti-democratic”

Rob Wiblin pushes back against some silly but common criticism of Anthropic in the DoW dispute.

Industrial policy for the Intelligence Age

OpenAI offers us Industrial Policy for the Intelligence Age: Ideas to Keep People First. This is a carefully crafted document full of inspiring language and noble sentiments, but it’s strikingly devoid of concrete proposals. For a 2015 college essay on the coming era of AI, it would be great. For a major paper by OpenAI in the same year they expect to achieve robust recursive self-improvement? It’s far too little, far too late.

China

How China hopes to build AGI through self-improvement

It’s easy to get the mistaken impression that Chinese AI development is limited to open models that are fast-following the US frontier. China has a huge lead in robotics, and ChinaTalk argues that China is approaching AGI via robotics and embodied AI.

I am unsure how important world models and embodied AI will be. It’s clearly true that operating a robot is different from writing software, and AI trained from the ground up for robotics will have abilities that a conventional LLM won’t acquire by default. But at the same time, I’m skeptical of the argument that “world models” have unique capabilities. LLMs have repeatedly shown a remarkable ability to generalize across domains, and my instinct is that a sufficiently advanced LLM will quickly be able to figure out robotics. If that’s the case, whoever solves recursive self-improvement first probably also solves robotic AI first.

Side interests

Is the smartphone theory of everything wrong?

It is intuitively obvious to many people (including me) that the combination of smartphones and social media has caused severe social harm including reduced attention spans and increased polarization. The data, however, paint a more complicated picture. Derek Thompson investigates in detail (partial $).